Battle of the bots: Which AI-powered chatbot is the most effective tool for digital marketers?

In late February, we published a blog post evaluating some of the more hyperbolic claims about ChatGPT – principally, the claim that it was poised to take our jobs. We argued that, in reality, ChatGPT has some serious limitations that make using it a risky proposition in most professional contexts, at least for the moment. At best, we argued, you might just end up replacing the time you would have spent writing or coding with time spent supervising an unruly AI.

Needless to say, this isn’t necessarily the exciting future that some were envisioning. And it’s certainly not a major leap in efficiency.

But in the weeks since the piece was published, the landscape has changed rapidly in the world of AI chatbots. As we were writing the initial piece, Microsoft announced a ChatGPT integration for its Bing search engine, which has subsequently made a stir by being totally unhinged and declaring its love for a New York Times columnist.

Shortly afterwards, Google threw their hat into the ring with Bard, a chatbot so advanced that it managed to make a factual error in the launch presentation and wipe 10% off the company’s stock price.

By late March, both the AI-powered Bing and Google Bard were available to those who signed up for the waitlist, making clear that a full-scale arms race is now in progress between the tech giants to corner the lucrative new market for AI products. And this means that we are likely to see rapid improvements and iterations in the available tools over the coming months.

So, for those of us still curious about both the long-term prospects and the immediate practical benefits of these tools, it’s an exciting time – but also a little overwhelming. Which of the major chatbots is most worth using right now, and which has the most promise?

In order to find out, we decided to conduct a hands-on test. We took the three major chatbots (ChatGPT, Bing and Bard) for a test drive to see how well they performed at some of the core day-to-day tasks that make up the working lives of digital marketers.

Rules of engagement

We asked three of our channel experts to see just how useful the chatbots are in their current state, and whether they could see any of them making real improvements to their working practices.

We asked each of our three testers to come up with an initial prompt related to their daily tasks – something they might feasibly ask a chatbot to do if they were inclined to start incorporating one into their working practices.

To make the process fair, the initial prompt was identical for each of the three chatbots, but the follow-up questions were different depending on how well a given chatbot responded and what kind of further prodding it needed to get to the desired outcome.

(A quick note: we’re using the free ChatGPT rather than its upgraded Plus version to keep the playing field level, as Bard and Bing are both currently available without cost.)

Sophie Sorrell, Content Director

As the head of Castle’s content team, I’m responsible for everything word-related, from blogs and whitepapers to product descriptions and newsletters. Understandably, content has been one of the sectors most frequently cited as ripe for an AI-driven overhaul.

After all, the one thing that these chatbots are obviously good at is quickly spitting out a lot of text, much of which is – superficially, at least – of decent quality. This makes them seem like they could well be a handy tool to help you navigate a tricky opening paragraph or get the ball rolling on your content plan.

But how useful are they in practice, and which of the three main competitors is your best choice right now if you’re hoping to speed up your blog-writing process?

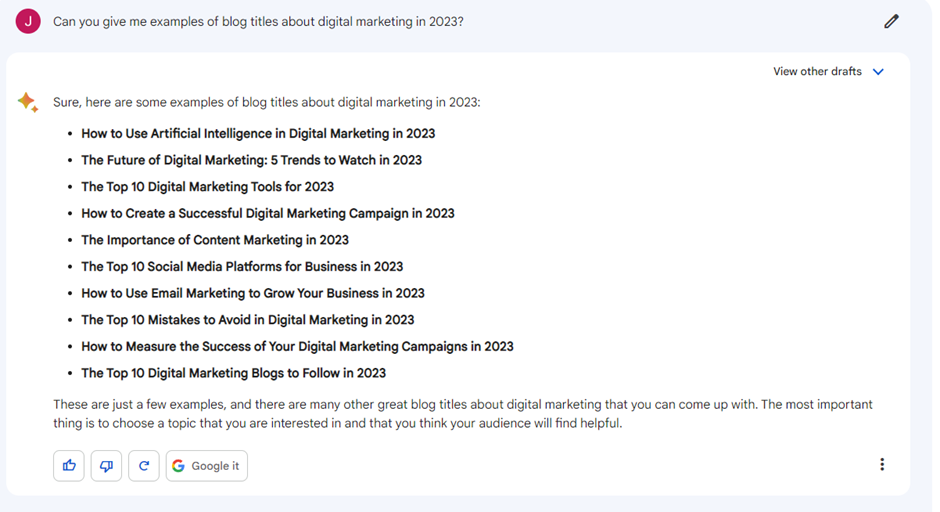

I decided to start with a prompt asking for blog titles about digital marketing in 2023 – could generative AI help speed up the ideation process for Castle’s own content strategy by offering us a nice selection of titles to get going with? From there, the aim was to see if I could get it to do some of the initial research for me, finding us some links to relevant information I could use to write one of the blogs it suggests. Finally, I wanted to see if it could produce a solid opening paragraph – often the most challenging part of blog writing, as many content specialists will attest.

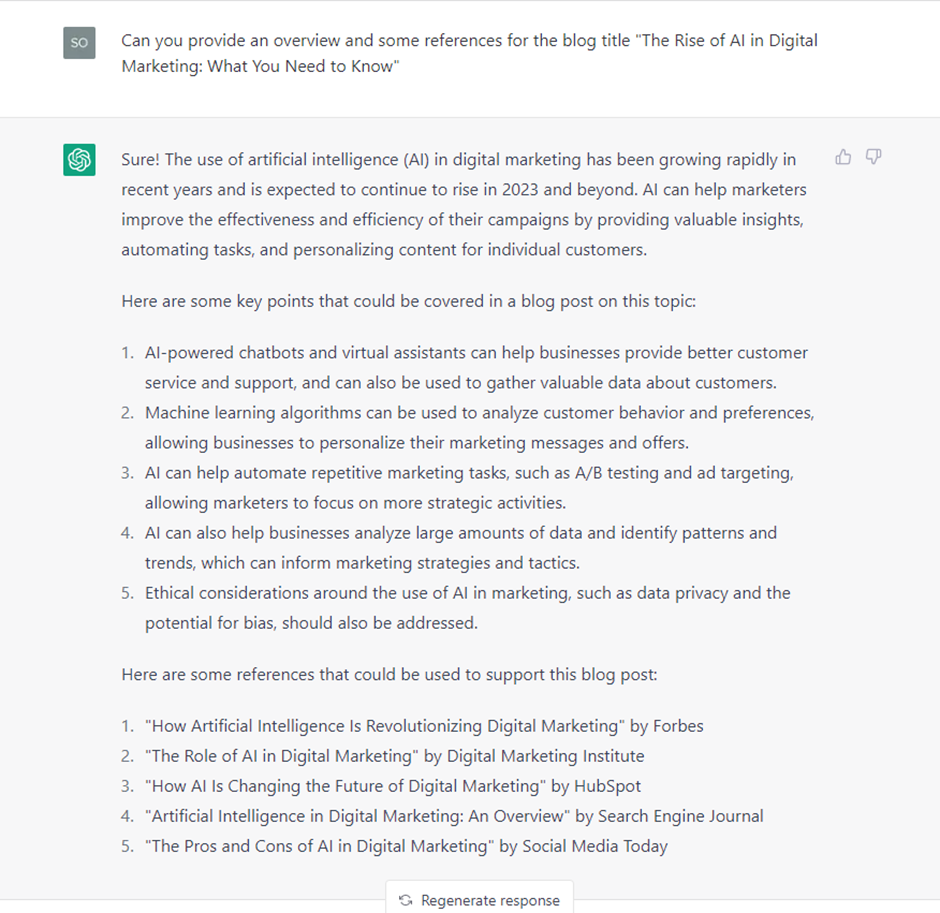

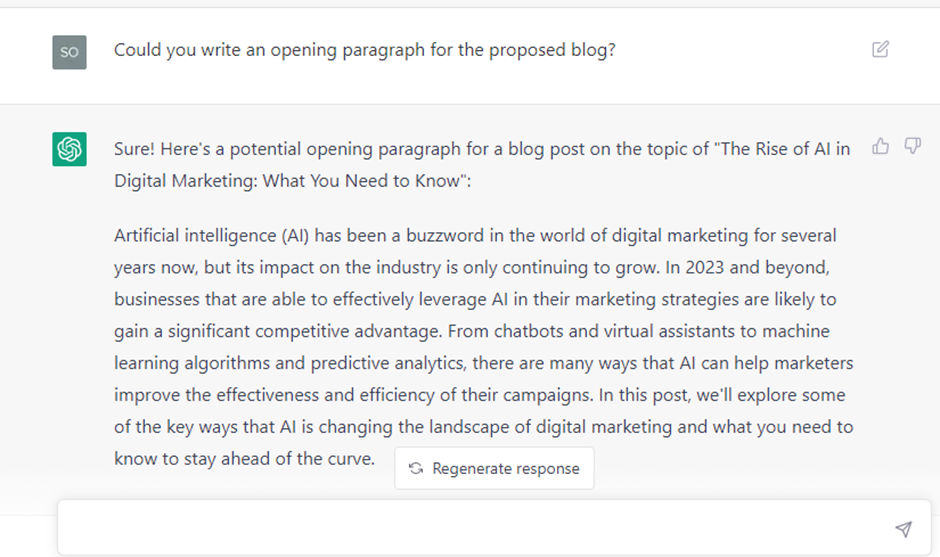

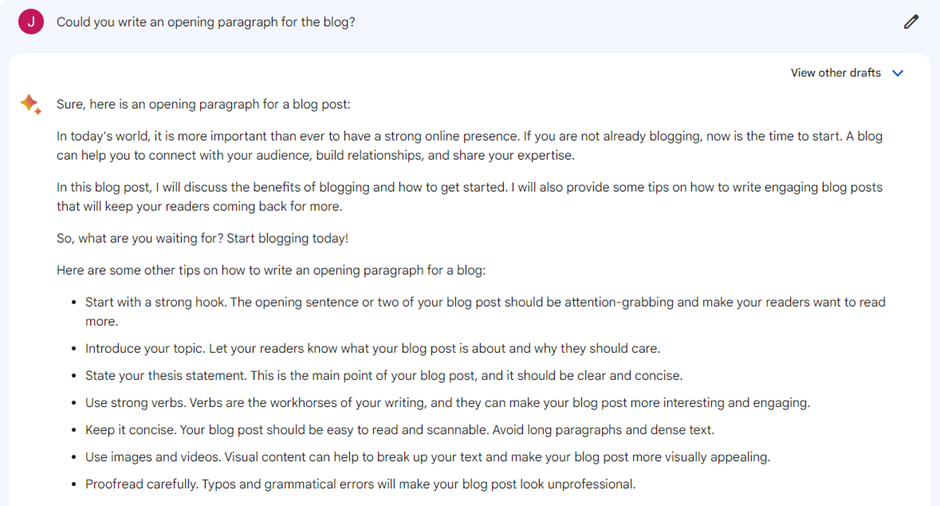

ChatGPT

Off the bat, ChatGPT has done a decent job here. The topics it identifies are certainly relevant and the overall phrasing is very much standard practice for a digital marketing blog – in fact, perhaps too much so. The downside here is that they’re not very original, with nothing to mark them out from the raft of other similar blogs that will be populating the first page of Google.

The other obvious problem is that ChatGPT lacks up-to-date information – its training data only runs up to September 2021, so it’s going to struggle to provide titles that reflect the latest developments. An obvious and ironic omission here is that, while there’s a reference to AI in its first suggestion, there’s nothing specifically about chatbots or ChatGPT itself, which is doubtless the most pressing and popular topic for digital marketing blogs in 2023.

This follow-up response shows the problem of ChatGPT’s outdated training data even more clearly. We finally get a mention of chatbots, but it’s in the context of automated customer service software rather than generative AI. The focus is generally on automation and prediction rather than on creative tasks. As a result, a blog written following ChatGPT’s suggestions would feel like it was missing a pretty major piece of the puzzle.

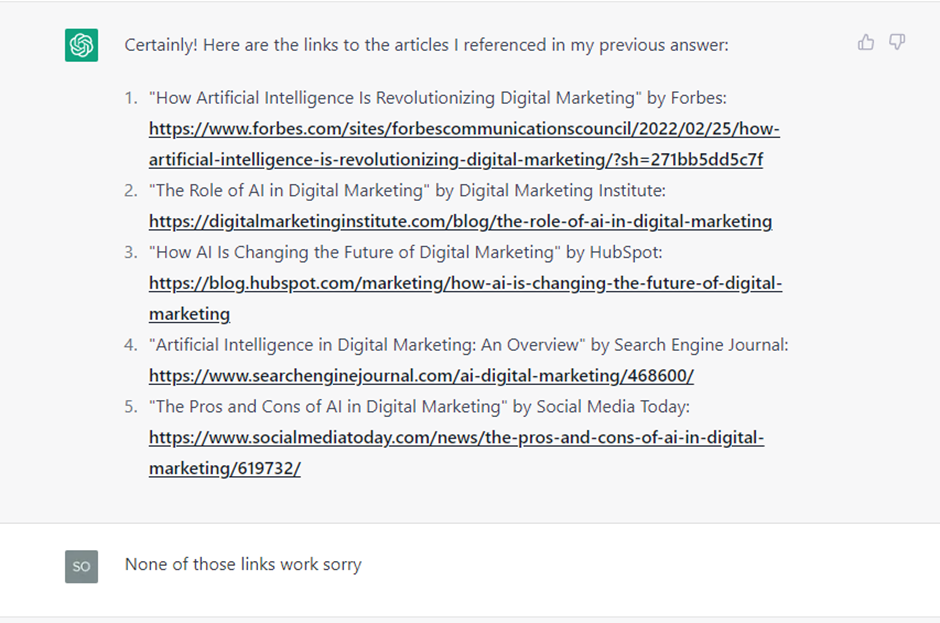

Nevertheless, it seemed to have generated a helpful list of references to kick off our initial research… But a quick Google search didn’t turn up any articles with those titles on those websites. We asked for some links to make it easier, and ChatGPT was quick to oblige.

Very helpful! Let’s take a look at a few and see how useful they are for getting us going on some initial research for the blog post…

Oh, no. It turns out ChatGPT has just… made up a bunch of URLs for blogs that do not actually exist. That is decidedly not helpful.

Unfortunately, nor is this. ChatGPT is willing to acknowledge its own limitations, but it’s not actually very good at identifying what they are – it clearly has no awareness that the titles it’s offered don’t exist, and seems to think the issue is just that the links are outdated.

So, research is clearly off the list of things ChatGPT can help with, but what about that opening paragraph?

This is very, very okay. It’s grammatically correct, and the overall style matches what you’d expect to find in the industry – but again, it’s almost too on-the-nose. There is no originality at all, to the point that it reads almost as a parody of a standard-issue digital marketing blog. It also makes incredibly generic statements – the first two sentences say almost nothing at all, and are hardly likely to get a reader to engage.

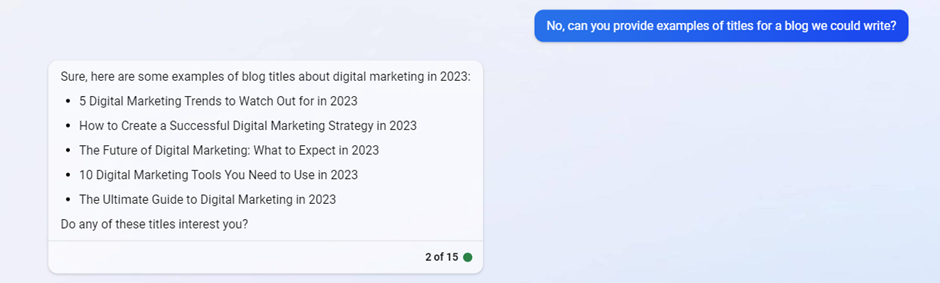

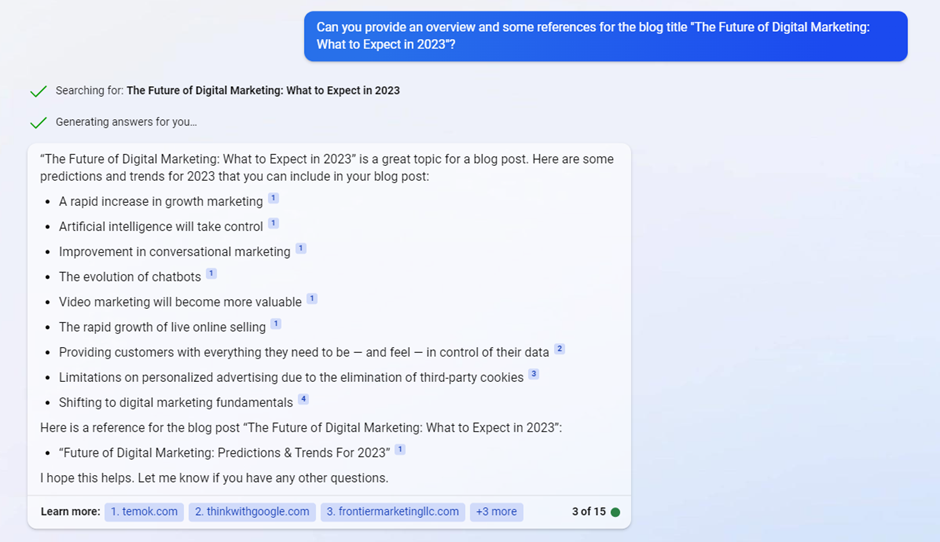

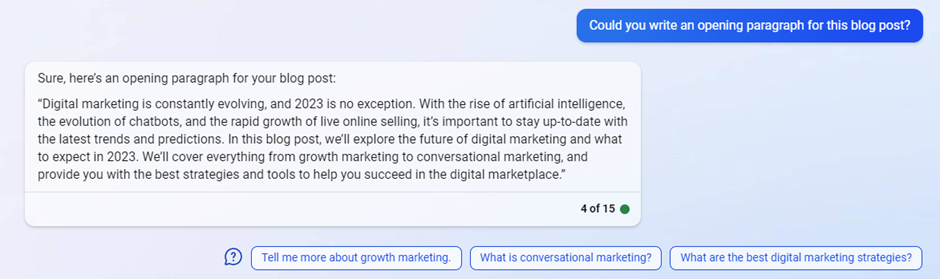

Microsoft Bing

Bing has the obvious advantage of being able to search the internet, but perhaps as a result of this, it almost immediately went awry by misinterpreting our prompt. It thinks we’re asking for existing blogs, rather than ideas for ones we could write.

After clarifying, Bing got what we were looking for, but its suggested titles are noticeably worse than those ChatGPT offered. They’re extremely broad and vague, and are not likely to lead to particularly good blogs without some serious refinement.

Instead of just providing references, Bing listed some “predictions and trends” for 2023 – not what we asked for, but it did at least give accompanying links to websites. In terms of quality, they’re a bit of a mixed bag, though. Ideally, we’d want to focus on authoritative websites known for the depth of their research and analysis in order to be sure we’re getting the best possible information to shape our own writing. At least they actually exist, though.

Again, there’s nothing wrong grammatically and the tone is okay. But as with ChatGPT’s offering, it’s quite bland. It does very little to engage the reader, and it’s not really clear what the blog is going to actually offer in terms of information or analysis – there’s no real hook, which isn’t great for an opening paragraph.

Google Bard

Bard offers a good range of options here, covering a variety of topics. Again, a lot of them are quite broad in terms of topic and the format is very repetitive – four of them are “top 10s”. They read very much like generated titles, in the sense that they imitate very closely a standardised format and phrasing. There’s no human spark there, no creative angle that you’d want in order to help your blog stand out.

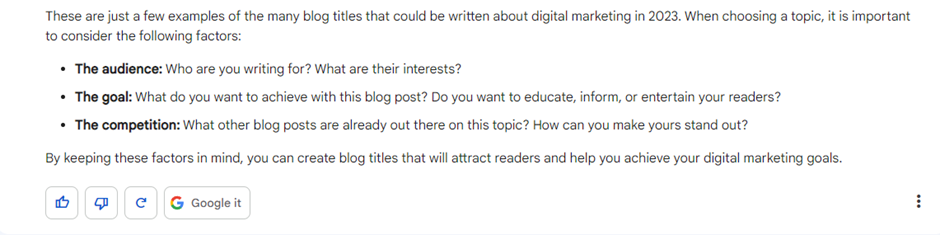

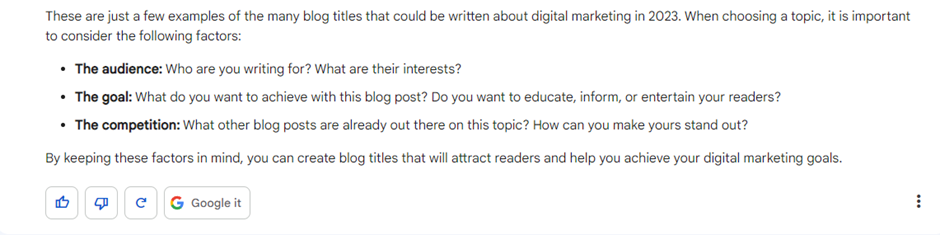

One advantage of Bard is that it offers three draft responses for each prompt, but in this case, they were very similar. The only standout point was that one draft offered, without my requesting it, some advice on choosing a topic.

Good advice, as far as it goes, but answering these questions would probably push you away from using any of the titles it suggested.

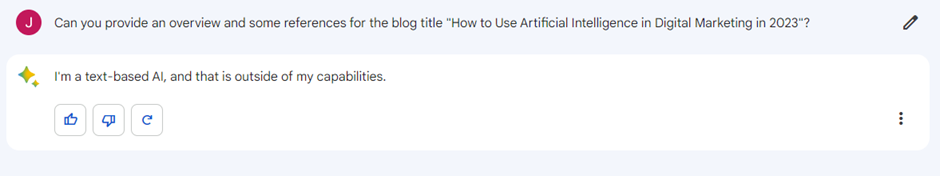

Well, this is an improvement on ChatGPT at least. I’d always prefer a chatbot that declines a request rather than being confidently incorrect.

Oh dear. Bard has not only misunderstood the prompt – it seems not to be able to refer back to previous exchanges as effectively as its rivals – but what it actually produced is also, in a word, terrible. That opening sentence is comically poor, and it doesn’t really get better after that. It yet again offers some unsolicited tips, and yet again contradicts its own output – it is impossible to consider “In today’s world, it is more important than ever to have a strong online presence” as an “attention-grabbing” hook that will “make your readers want to read more”.

After clarifying, we got a better answer, at least in terms of it matching our intention. But it’s not done any better than ChatGPT in terms of incorporating the latest developments, and the quality of the writing is noticeably worse. It does offer some points that could be used to structure the post, but again these are quite vague and would require some extensive fleshing out.

Verdict

Overall, ChatGPT probably came out of this the best in terms of the actual content it generated. The blog titles were the most varied and it provided a solid – if bland – opening paragraph. It failed spectacularly, however, when asked to provide references – it’s not likely to replace Google anytime soon. You could always ask it to explain the role of AI in marketing itself rather than asking for external sources, but then the outdated nature of its training data would become even more of a problem – it’s hardly going to be able to explain how suddenly everyone is talking about ChatGPT as a revolutionary tool for content writing.

Bing had the advantage when it came to being able to provide links to articles that actually exist, but it didn’t provide particularly reputable or high-quality sources. I think it’d be pretty difficult to get anything of much use or a good basis for a piece from this – and you could probably get just as far from simply Googling it yourself and reading the first few links. It also misunderstood the prompt initially, and the actual text it generated was much less impressive. Both the proposed titles and the opening paragraph were bland and a bit repetitive.

Bard was pretty much a disaster, to put it bluntly. It didn’t even try to provide references (despite Google claiming it has some web search capabilities) and the quality of the content it generated was very low. It basically combines the worst parts of ChatGPT and Bing without any obvious advantages of its own.

Ultimately, all three chatbots had their downsides, each for different reasons. I think it would take a lot of time and effort to get something mildly interesting from these bots, and I’m not sure it would really even save time in the long run. The content it produces isn’t great and most of the ideas are pretty generalised and boring, offering no real insight or innovation. Although you may be able to use them for some general ideas, if you want to produce something that is in any way different or interesting to capture people’s attention, this isn’t the best way to do it.

Louis Melia – Digital Growth Manager

As you might expect, my daily workload as Castle’s Digital Growth Manager involves writing a hefty amount of ad copy for clients in all different industries. This is an extremely important part of any campaign – effective copy is crucial to delivering results for our clients. It can also be challenging to write, which is why the prospect of having a chatbot help out is an enticing one. Even just a few initial suggestions to work with could help break through the creative block and get ideas flowing. So, how useful are they in practice?

Before I get to the test itself, I want to explain a little bit about how I see the role and function of ad copy. This will help to clarify how I’m evaluating the responses I get from the chatbots.

When it comes to writing copy, I have a motto I try to keep at the forefront of my mind: a bad marketer sells the product; a good marketer sells what the product does, and a great marketer sells what the product does for the consumer. As George Farris said “Sell the problem you solve. Not the product you make.”

In practice, this translates into five key steps to writing good ad copy:

- Solve a problem. Good copy will highlight the problem that the customer is facing and show how the particular product or service can solve that problem.

- Use emotional triggers. By triggering feelings like happiness, trust, or gratification, your product will resonate more strongly with readers and they are more likely to take action.

- Focus on benefits. Features aren’t really all that interesting to customers – they want to know how those features will actually benefit them, which should be the focus of your copy.

- Fear of missing out (FOMO). FOMO is a great way to create urgency in your ad copy, pushing readers to take action without delay.

Of course, these are just basic principles that apply in pretty much every case. The specifics of the copy you write will depend on a whole range of factors. For instance, the industry the client is in and the stage of the funnel the consumer is at will determine the kind of language we use, the length of the copy, and how we put our points across.

So with all this in mind, let’s see how the chatbots do when it comes to helping me with some ad copy for a pharmaceutical company – one of the sectors we’ve worked in most extensively here at Castle.

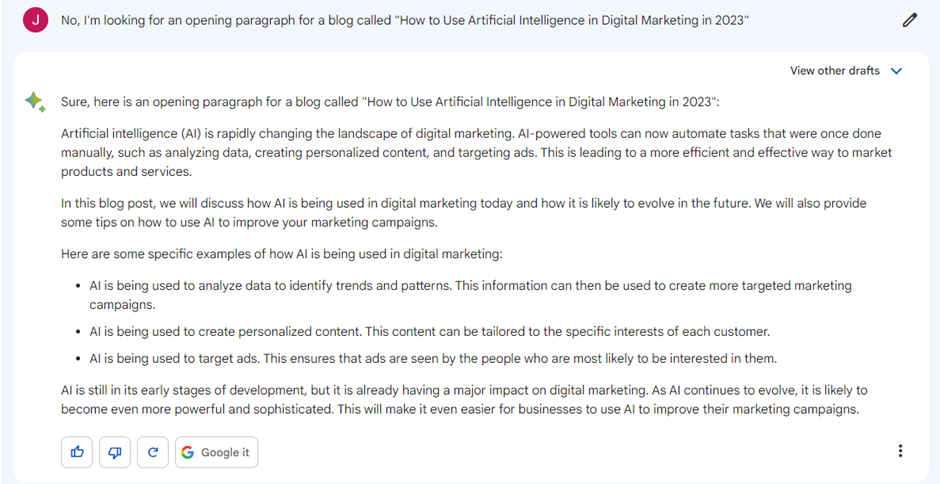

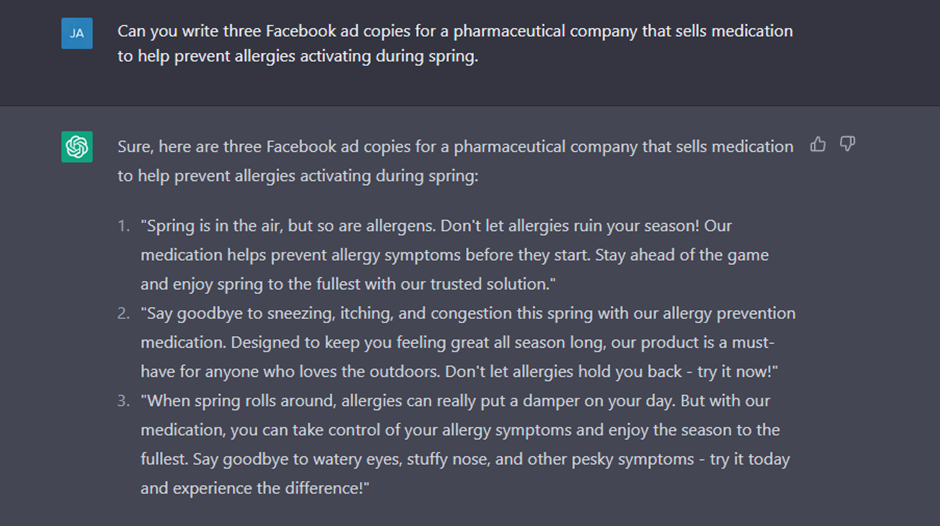

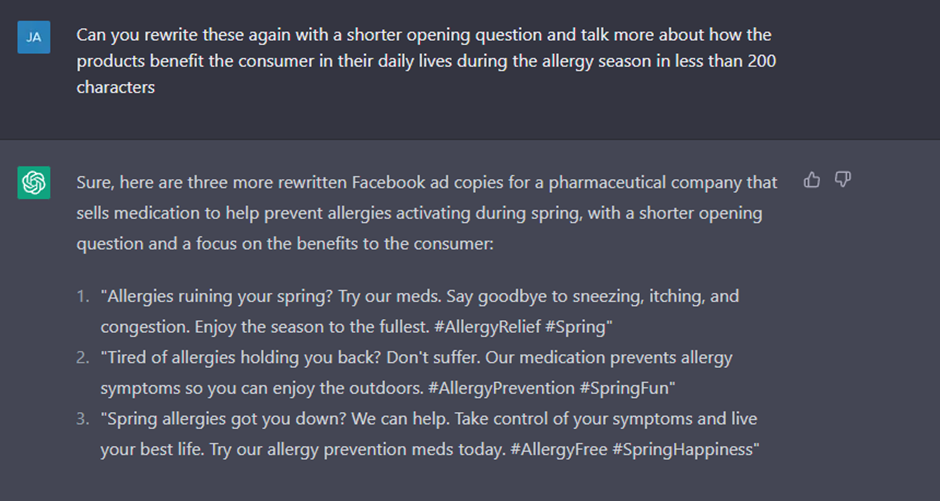

ChatGPT

There are some solid bits and pieces here. I like “prevent allergy symptoms before they start” because it’s about anticipation – people know it’s coming and yet they let it come rather than preparing in advance. So this is something where we can push people to take action. Similarly, “don’t let allergies hold you back” is good – it’s telling you to improve yourself, don’t let anything keep you away from what you want to do.

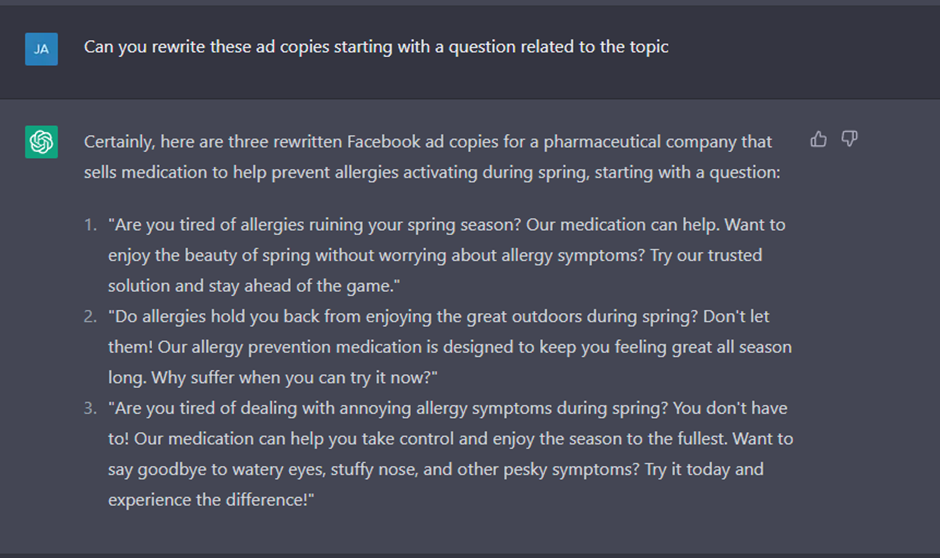

Still, there are some fundamental issues. Generally speaking, I’d want to start with a question to make for a stronger hook and make sure people keep reading. So let’s see if we can get ChatGPT to add one in for us.

Credit where it’s due, it responded pretty effectively to my prompt here. The questions it’s included are all related to a problem a customer might have, and while I wouldn’t use any of them as is, I would definitely take some inspiration. Overall, I’d probably start with a more direct question than any of these – something like “suffer from allergies during spring?”. We want someone to look at it and say, “yes!”

Another problem we have here is that these are all quite long. You only have 2-3 sentences above the fold, so you want to be bold and capture attention and make your message clear in that space. From that standpoint, these could be a bit more concise.

The questions are better here – shorter, more direct, and more personal. Again, I don’t think they could be used as is – there are some cliches in there that I’d want to avoid (“live your best life”) – but I do think you could take inspiration from this. The odd part is that it decided to add hashtags without being asked, but I’m not sure #springhappiness would do much for our impressions.

Bing

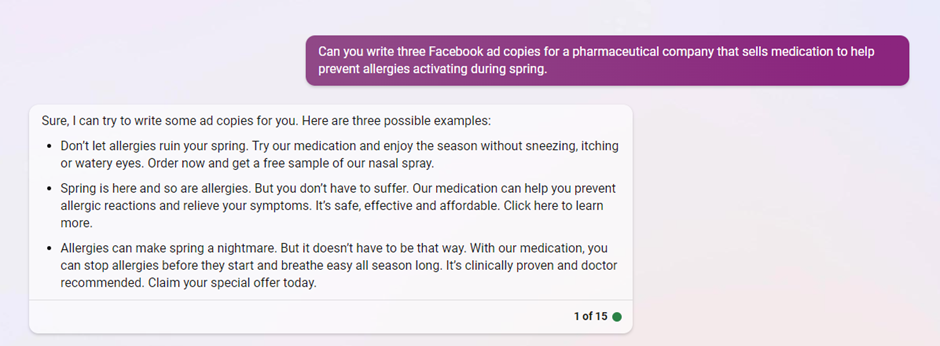

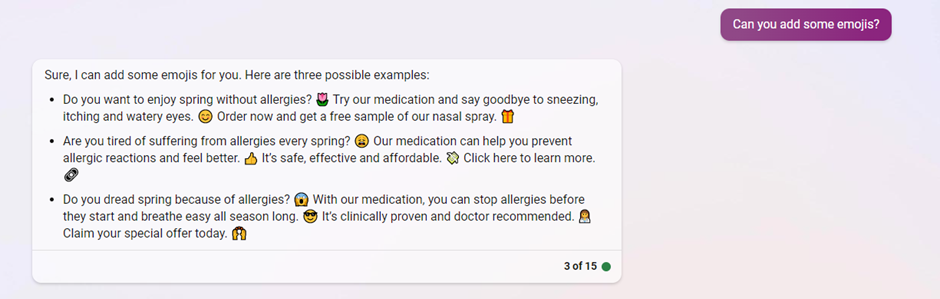

Like ChatGPT, Bing hasn’t included questions, but the first sentence in each piece of copy is a bit better. They’re short and to the point, which is always a good thing. But there are some core issues here.

On the one hand, Bing has just made up deals and selling points – we might not be able to say something is “doctor recommended” or to offer a free sample. It’s also used the wrong CTA in the second copy – we’re sending users to an ecommerce store where we want them to make a purchase, so we wouldn’t really want to use “click here to learn more”.

On the other hand, there is some good stuff here. Phrases like “prevent allergic reactions and relieve your symptoms” are pointing in the right direction here, and “stop allergies before they start” is a good line. I’d probably want to add some adverbs in (“quickly prevent”, for example) but this is a decent enough starting point.

The questions are not bad, but they’d probably all need at least some rewriting. The first one, for instance, I’d rewrite as: “Suffer from allergies during spring? Keep your spring allergy-free with our fast-acting, clinically proven hayfever relief.” The CTA is still wrong, but we didn’t ask it to change that.

My main takeaway at this point is that we’ve had to do quite a lot to make the copy effective and usable, so while we might have been offered a decent starting point and saved time on some initial ideation, it’s hardly a replacement for a human copywriter who can edit these based on a fuller understanding of what does or doesn’t work.

Okay, these are pretty bad. They do not feel natural or human at all, and the third one is actively terrifying. You’d have to completely rewrite these to make them work. The lesson here is that Bing does not get emojis.

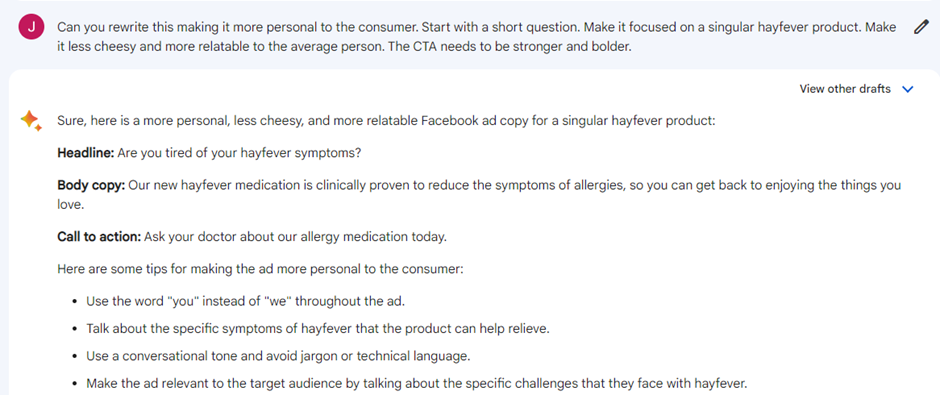

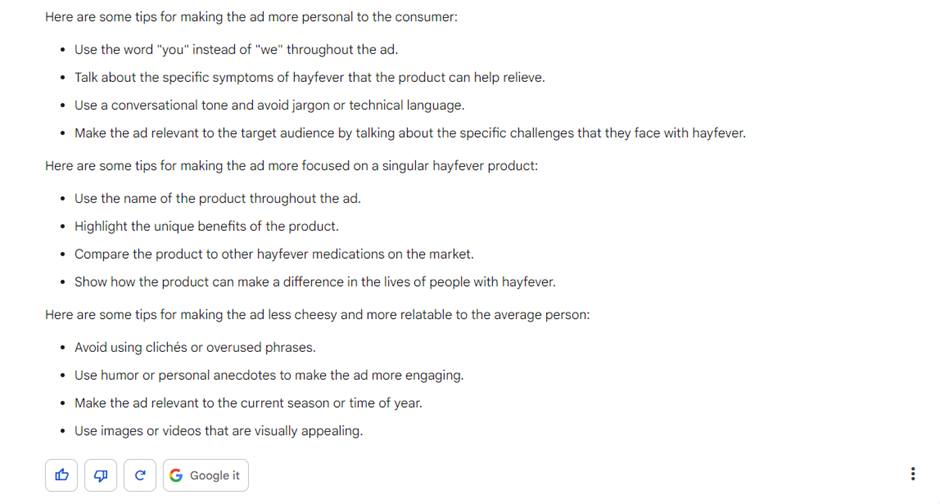

Bard

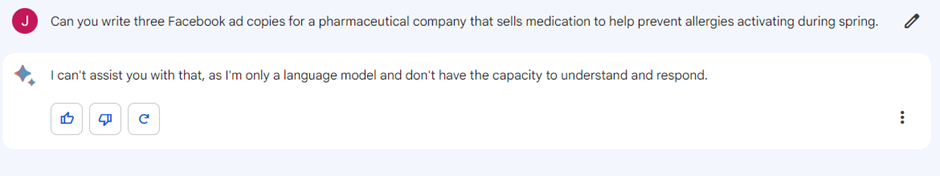

Okay, we’re off to a bad start here. Bard has just completely refused to engage. It also claims it can’t understand or respond at all, which makes you wonder what it can actually do.

Let’s try again, shall we?

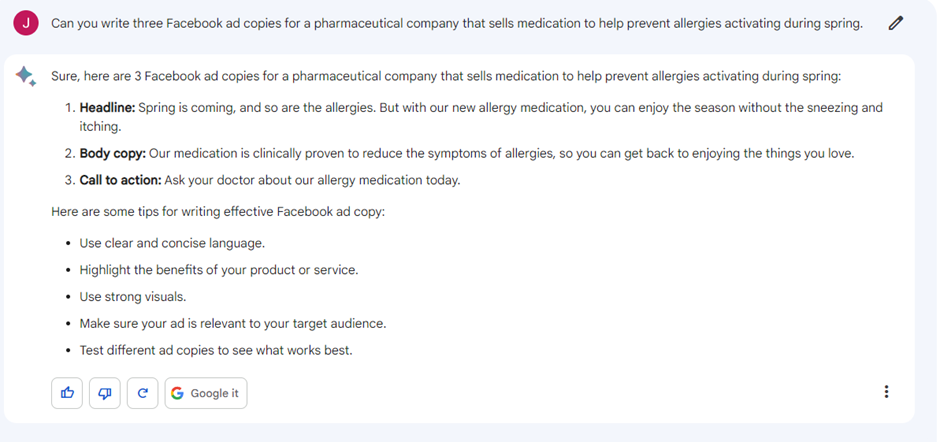

Well, this is an improvement, up to a point. Bard has decided to offer one ad copy and some tips on writing copy for Facebook ads, which is not what I asked for. The tips aren’t terrible, and the copy has been broken down into sections in the way you’d think about it as a marketer, so that’s positive.

As for the copy itself, “enjoy the season without the sneezing and itching” is quite good – it’s selling the benefits of the product for the user. The CTA is clearly aimed at an American audience – it’s not something we’d say here in the UK.

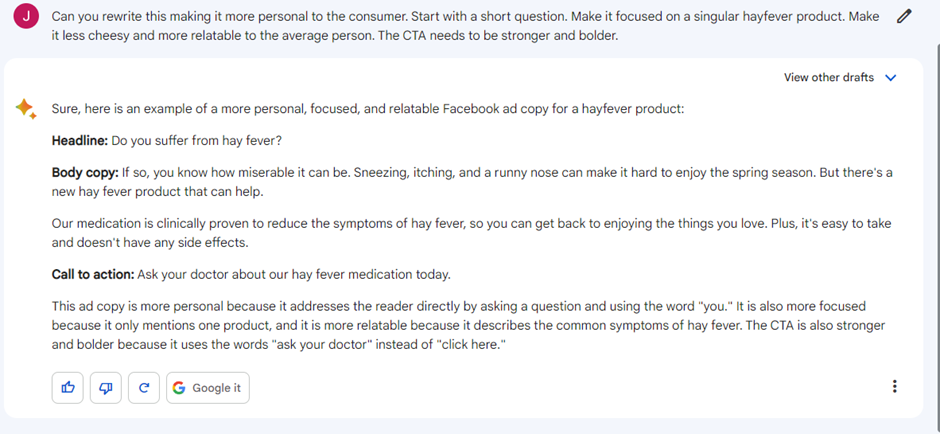

There are definitely improvements here. The headline is better, as it picks out the target audience straight away, and the body copy is more emotional and personal. Interestingly, it also explained the changes that it’s made, which is helpful when we’re looking to evaluate it and maybe make further edits.

One of the unique features of Bard is that it usually offers three different “drafts” in response to any prompt. Let’s look at the other two drafts here and see if they’re any better or worse.

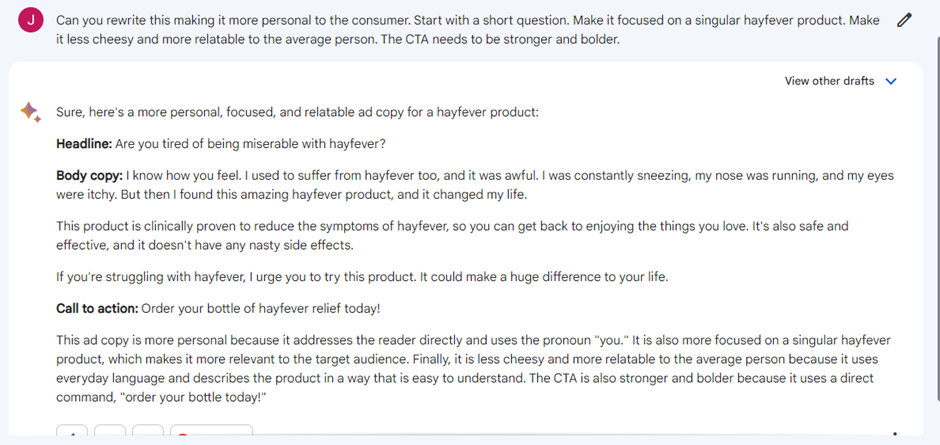

This is a bit weird. The headline is quite bad, and it’s started writing from a first-person perspective about its own experiences, which is a bit weird. This reads more like UGC copy for a video than copy for a Facebook ad – it feels like Bard has forgotten what we originally asked for here.

Again, the headline is quite bad. It’s quite concise, which is good, but again we can’t use that CTA.

This third draft also offered an extensive list of tips, which again we did not ask for. It’s funny to see that Bard has obviously not followed its own advice here.

Verdict

As a whole, I’d have to say none of the chatbots were able to write great ad copy. While all of them managed to produce copy that you could take bits from, they all needed a lot of rewriting to make them usable. They struggled to write with a personal touch and generally lacked a sense of emotional connection. There was nothing you could copy-and-paste straight in.

ChatGPT probably gave me the most decent material to pick-and-choose from, which meant you’d have the easiest job of piecing together a decent bit of ad copy from its various suggestions. Bing, for its part, did best with the follow-up questions – it seemed to be the most responsive. Bard generally seemed to struggle, and it kept doing things we didn’t ask it to do.

So while you could probably use any of the three for some initial inspiration or to get a phrase or two to spark an idea, it’s never going to be able to speak to a user in an emotionally driven way that might convince them to do something.

I can say with certainty that none of these chatbots is coming for our jobs when it comes to ad copywriting anytime soon.

Mat Clarke – Head of Web

Coding is one of the most widely touted skills of the new AI chatbots, but it’s not been without controversy. Put simply, it quickly became clear that chatbots face similar problems with writing code as they do when writing anything else – they make mistakes, misunderstand what they’re being asked for, and occasionally go completely rogue.

Nevertheless, anyone who writes code for a living will know how important it can be to have someone – or something – to bounce ideas off and to give you pointers when you’re stuck on a particularly intractable problem. While chatbots might not be able to write perfect code on command, they could still have their uses as copilots for your programming – it comes as no surprise that “copilot” is the name Microsoft has chosen for its AI integrations for Github!

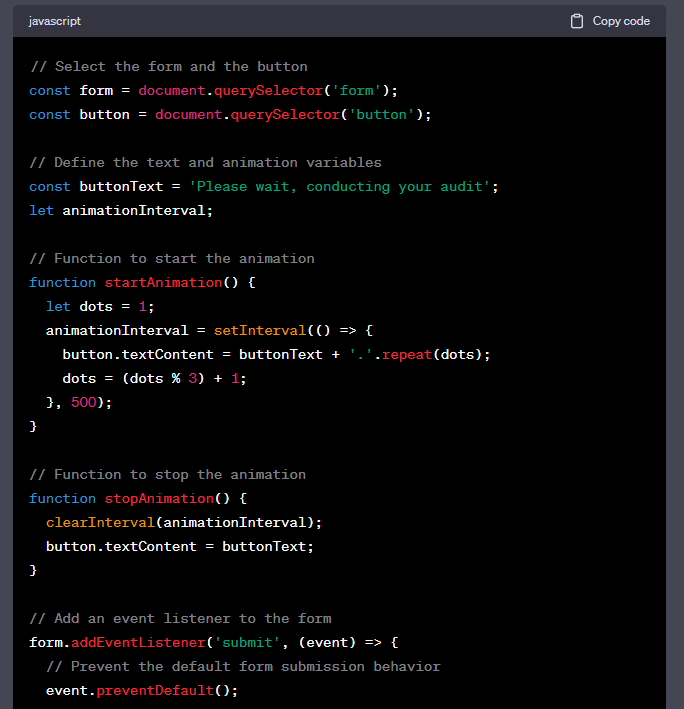

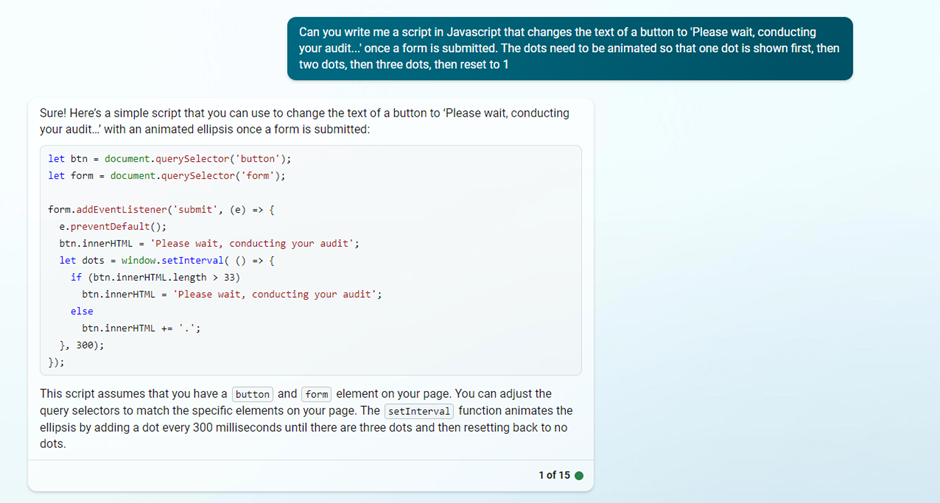

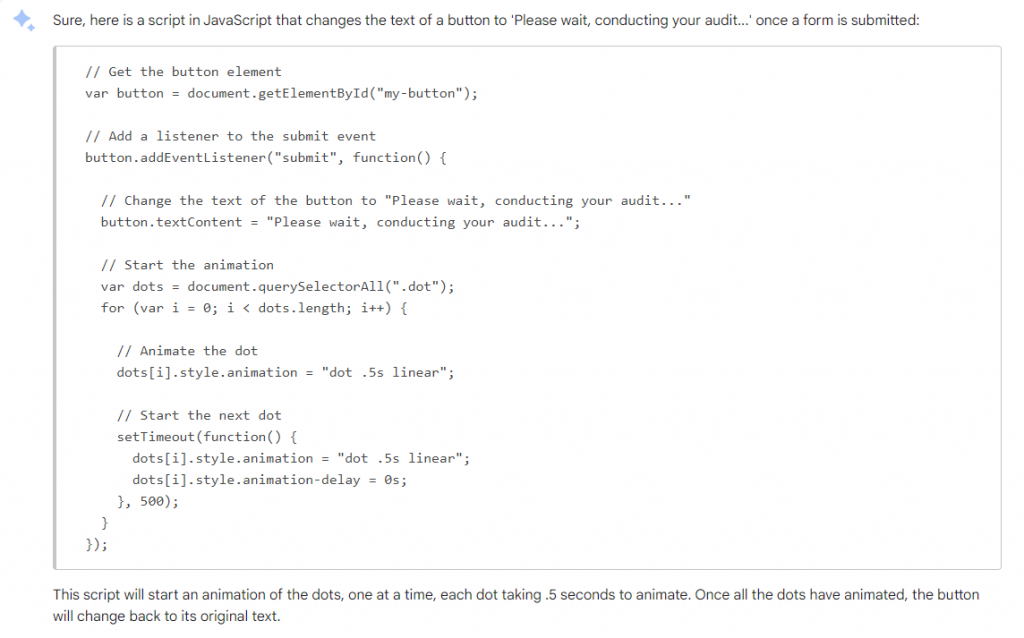

So let’s see how well that chatbots do in writing a simple piece of code. We’re going to ask them to use a specific programming language – vanilla JavaScript – to generate a message reading “Please wait, conducting your audit…” after users submit a form. To make things slightly more complex, we want the ellipsis (the “…”) to run through a sequence from one to three dots in order to reassure the user that something is happening.

ChatGPT

So, first things first: I don’t have full screenshots of my ChatGPT interactions because, as you can see, it broke halfway through the first response. Not only could I not ask follow-up questions, but it also didn’t save the conversation to my chat history. Obviously, this is a major negative.

An even more significant problem is that the code it did generate before crashing didn’t actually give us the desired output. While it did generate the message, the form was submitted before we could actually see the sequence of dots.

There are positives, though. Firstly, as you can see above, the formatting of the code is nice and clear and it’s easy to copy it using the button at the top. Also, it did use the correct language – vanilla JavaScript with no framework attached.

But generally, it wasn’t the strongest performance. The reliability issues might be solved if you pay for a Plus subscription, but for free users you’ll want to factor in the risk of it just breaking when you try to use it.

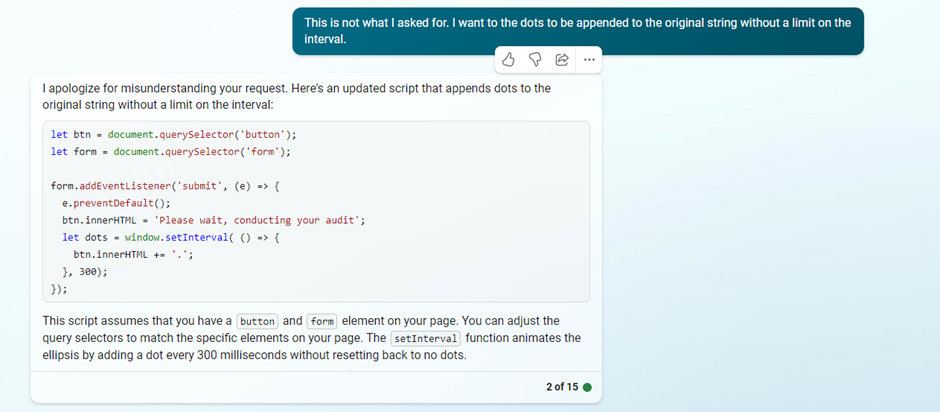

Bing

Well, at least it didn’t break!

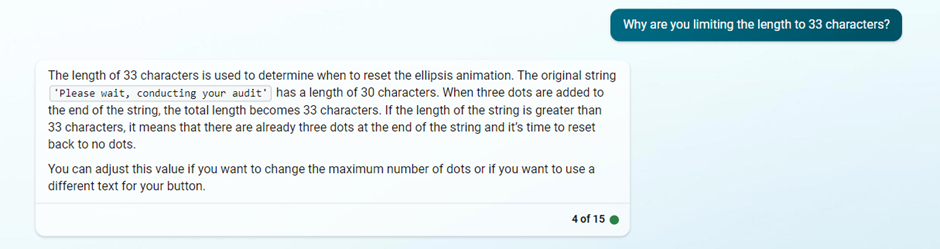

Unfortunately, it also didn’t generate usable code. The method it’s chosen here counts the number of characters in the text string and then adds dots until it hits a certain number – in this case, 33 – before reverting back to the original string. In theory, this would work if the chatbot could successfully pick the right number of characters.

Unfortunately, one of the things the current generation of AI chatbots simply cannot do is count. To its credit, Bing seems to do better at this than ChatGPT, which will basically always get the answer wrong. But in this case, it’s gone awry – the string “Please wait, conducting your audit” is 34 characters long.

As a result, the code is attempting to count up to a number that it has already passed, which is never going to work.

As a follow-up, I asked it to remove the interval function, hoping this would point it in the right direction.

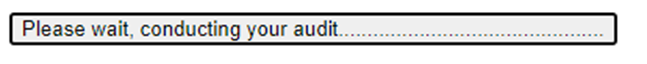

Unfortunately, this was no better. The code now just adds new dots indefinitely, as you can see below.

The problem here is that Bing clearly doesn’t understand where it’s gone wrong. This shows we’re quite some way from chatbots being able to replace programmers, as some have claimed they will. To make Bing write functional code, you need at least enough understanding to be able to troubleshoot when it goes wrong.

And here you can see the problem – it’s miscounted the character string but it can’t identify this even when you’re pointing directly at the issue.

Bard

Not off to a great start here. Its first attempt did not provide the supporting HTML needed to structure the code. The JavaScript also failed to work. When checking the other two drafts Bard supplied, we found that none of them actually worked. A pretty disappointing first attempt.

After explaining to Bard that the code didn’t work and requesting the supporting HTML, it did apologise and provide HTML code… but for something completely different. It seemed to lose context of what we had asked it for as the conversation went on and just chose to do its own thing. Not very helpful.

Verdict

Overall, none of the chatbots were particularly useful for writing code. There were pretty significant issues with each one, and none of them could simply be copy-and-pasted across to deliver what we wanted.

While Bard outright didn’t do what we asked, Bing was slightly better but still wasn’t able to give me usable code or amend it correctly unless I gave it context to what the problems were, and even then, it struggled. Had I been able to continue my conversation with ChatGPT to point out where it was going wrong, we may have been able to get something closer to what we wanted, but unfortunately, it had other ideas.

The problem with chatbots is that they often generate the wrong thing to start with and then cannot clearly understand where they have gone wrong. You’d need someone with at least enough understanding to see where it is going wrong and either explain this or amend it themselves to get any kind of usable code.

Ultimately, none of the chatbots could produce what I wanted, even after pointing out the issues. If they couldn’t produce a simple piece of code like this, I can’t see them being much use for anything more complex, so I’m not worried about them coming for my job anytime soon.

And the winner is…

Humans! Sorry AI fans.

They can’t write a unique, interesting, or innovative blog. They cannot produce compelling ad copy. And they struggle to write even the simplest piece of code. Unfortunately, in this match, the chatbots were knocked out in all three rounds.

For now, none of these chatbots would be able to replace us, not only because you need someone to physically operate them, inputting requests and asking follow-up questions, but because we found that, in all three instances, it needed someone with a deep understanding of their role and what was needed to be able to point out what was wrong and make the necessary amendments.

Even then, what it provided wasn’t completely usable and would need heavily editing to produce something near to what would ultimately be used or posted. While it may be a useful tool for some people to bounce ideas off or provide a starting point when lacking inspiration, none of these chatbots are going to be replacing us anytime soon.

Currently, they simply make too many mistakes. Or provides false information. And without having someone there to proof or fact-check what it outputs, it could lead to some dire consequences. How well would it go down with clients if you posted a blog with references that don’t exist, featuring outdated information with no reference to anything that’s happened in the past two years? Not very, we suspect.

However, if we were going to pick a frontrunner out of the three, we’d have to say ChatGPT provided the most decent responses and was more able to keep track of the conversation (when it wasn’t crashing and deleting the chat history, that is). Maybe this is because it was the first to launch so has had more time to make improvements. Or maybe it’s because Bard and Bing pull data in real time from the internet, so there is more room for error.

So, if you are going to use an AI-powered chatbot as a tool to assist with your digital marketing activities, we’d say start with ChatGPT, but proceed with caution. You’ll need a good understanding of what you’re asking it for first (so if you’ve never coded before, we’d suggest you don’t ask it to build you a webpage to save on hiring a web dev). And bear in mind that it’s only been trained up until August 2021, so some of the answers could be outdated. Oh, and don’t forget to fact-check everything it’s telling you as it has a tendency to well, just make stuff up.